Research

Normal and Impaired Word Reading

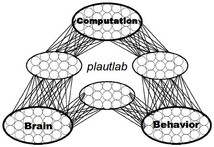

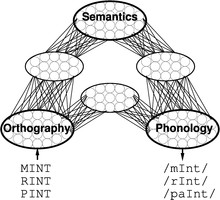

Much of my early work focused on word reading, both in normal skilled readers and in brain-damaged patients with acquired reading disorders. Word reading is a particularly informative domain for studying cognitive processes because it involves learning to relate visual (orthographic), phonological, and semantic information in a highly skilled manner. My colleagues and I have developed artificial neural-network (connectionist) models that exhibit many of the central characteristics of skilled reading. These include the influences of word frequency and spelling-sound consistency on the time to pronounce words. They also include the ability to pronounce word-like nonsense letter strings (e.g., MAVE) and to distinguish them from real words in lexical decision tasks (Plaut, McClelland, Seidenberg & Patterson, 1996). When the models are damaged in various ways, they exhibit the major forms of acquired dyslexia. One is deep dyslexia, in which patients make semantic errors in reading aloud (e.g., misreading YACHT as “boat”; Plaut & Shallice, 1993). Another is surface dyslexia, in which patients produce regularization errors to exception words (e.g., misreading YACHT as “yatched”; Woollams, Lambon Ralph, Plaut & Patterson, 2007). Moreover, retraining the damaged models yields patterns of recovery and generalization that are qualitatively similar to those found in cognitive rehabilitation studies. In one instance (Plaut, 1996), this work generated a specific prediction concerning the design of more effective therapy for patients, a prediction that later received direct empirical support (Kiran & Thompson, 2003, JSLHR).
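One simple way to simulate damage in a connectionist model is to remove some of its learned connections. The Python sketch below illustrates that idea by setting a random proportion of weights to zero. The `lesion` helper and the list-of-lists weight format are hypothetical choices for illustration. They are not the specific lesioning procedures used in the studies cited here.

```python
import random
from typing import List

Matrix = List[List[float]]


def lesion(weights: Matrix, proportion: float, seed: int = 0) -> Matrix:
    """Return a copy of `weights` with a random `proportion` of connections zeroed.

    Hypothetical helper for illustration only: zeroing weights is one generic
    way to simulate brain damage in a connectionist network.
    """
    if not 0.0 <= proportion <= 1.0:
        raise ValueError("proportion must be between 0 and 1")
    rng = random.Random(seed)  # seeded so a given lesion is reproducible
    # Enumerate every (row, column) connection in the weight matrix.
    connections = [(i, j) for i, row in enumerate(weights) for j in range(len(row))]
    n_remove = round(proportion * len(connections))
    removed = set(rng.sample(connections, n_remove))
    # Build a new matrix; the original weights are left untouched.
    return [
        [0.0 if (i, j) in removed else w for j, w in enumerate(row)]
        for i, row in enumerate(weights)
    ]
```

For example, `lesion([[0.5, -1.2, 0.3], [0.8, 0.1, -0.4]], 0.5)` zeroes three of the six connections. The damaged copy could then be retrained with whatever learning procedure the intact model used, to study relearning.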

- Plaut, D.C., and Shallice, T. (1993). Deep dyslexia: A case study of connectionist neuropsychology. Cognitive Neuropsychology, 10, 377-500.
- Plaut, D.C., McClelland, J.L., Seidenberg, M.S., and Patterson, K. (1996). Understanding normal and impaired word reading: Computational principles in quasi-regular domains. Psychological Review, 103, 56-115.
- Plaut, D.C. (1996). Relearning after damage in connectionist networks: Toward a theory of rehabilitation. Brain and Language, 52, 25-82.
- Woollams, A., Lambon Ralph, M.A., Plaut, D.C., and Patterson, K. (2007). SD-squared: On the association between semantic dementia and surface dyslexia. Psychological Review, 114, 316-339.

Derivational and Inflectional Morphology

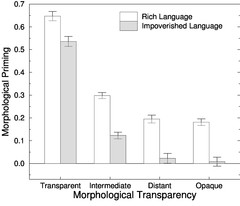

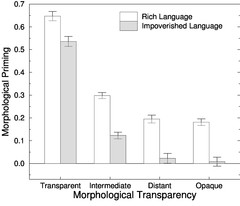

Traditional theories posit that complex words are composed of discrete units called morphemes that contribute systematically to their meanings (e.g., TEACH+ER, GOVERN+MENT). However, some words are awkward on this account. For example, DRESS+ER is not someone who dresses. Similarly, MOTH+ER, FATH+ER, SIST+ER, and BROTH+ER are all agents, but the remaining parts are not coherent units. On a distributed connectionist approach, by contrast, morphology reflects a learned sensitivity to the graded degree of systematicity among the surface forms of words and their meanings, without the need to posit discrete segmentation. Explicit simulations demonstrate that the degree of sensitivity to apparent morphological structure in the absence of semantic similarity (e.g., BROTH+ER) depends on the morphological richness of the language as a whole (Plaut & Gonnerman, 2000). This accords with empirical findings (e.g., Velan, Frost, Deutsch & Plaut, 2005). More generally, insights drawn from the connectionist perspective on morphology have been assimilated into many areas of the study of language. The same is true of insights from its debate with “rule-based” accounts, in particular concerning the English past-tense system. These insights have shifted the focus of research from abstract characterizations of linguistic competence toward the role of the statistical structure of language in acquisition and processing (Seidenberg & Plaut, 2014).

- Plaut, D.C., and Gonnerman, L.M. (2000). Are non-semantic morphological effects incompatible with a distributed connectionist approach to lexical processing? Language and Cognitive Processes, 15, 445-485.
- Velan, H., Frost, R., Deutsch, A., and Plaut, D.C. (2005). The processing of root morphemes in Hebrew: Contrasting localist and distributed accounts. Language and Cognitive Processes, 20, 169-206.
- Seidenberg, M.S., and Plaut, D.C. (2014). Quasiregularity and its discontents: The legacy of the past tense debate. Cognitive Science, 38, 1190-1228.

Semantics and Word Comprehension

Optic aphasia.

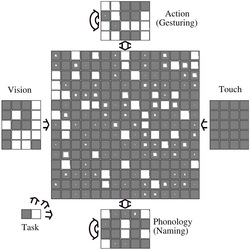

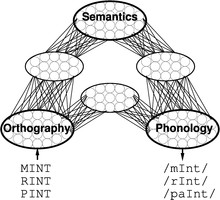

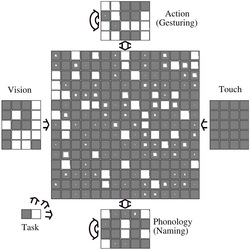

A longstanding debate concerns how semantic knowledge is represented. On one view, it resides in a single, amodal system. On the other, it is organized into multiple subsystems based on modality of input or type of information. A distributed connectionist perspective offers a middle ground. Semantic representations develop under the pressure of learning to mediate between multiple input and output modalities in performing various tasks. This learning is subject to a constraint to minimize connection length (and, hence, overall axon volume). An implemented model provides a quantitative account of optic aphasia, a selective deficit in naming visually presented objects. In the model, this deficit follows damage to connections from vision to regions of semantics near phonology (Plaut, 2002). Additional implementations of the process by which visual representations activate semantics account for two further phenomena. The first is detailed patterns of semantic priming and how these vary across individuals over the course of development (Plaut & Booth, 2000). The second is distinct patterns of impairment in word and picture comprehension reflecting “access” versus “degraded-store” deficits (Gotts & Plaut, 2002).

- Plaut, D.C., and Booth, J.R. (2000). Individual and developmental differences in semantic priming: Empirical and computational support for a single-mechanism account of lexical processing. Psychological Review, 107, 786-823.
- Gotts, S.J., and Plaut, D.C. (2002). The impact of synaptic depression following brain damage: A connectionist account of "access/refractory" and "degraded-store" semantic impairments. Cognitive, Affective, and Behavioral Neuroscience, 2, 187-213.
- Plaut, D.C. (2002). Graded modality-specific specialization in semantics: A computational account of optic aphasia. Cognitive Neuropsychology, 19, 603-639.

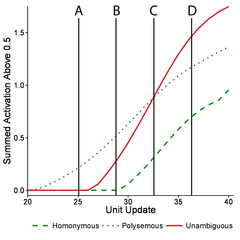

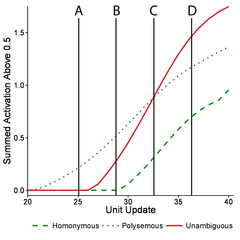

Semantic ambiguity.

The meanings of most words depend on the context in which they occur (e.g., vs. BANK). Developing an account of how semantically ambiguous words are understood is a critical aspect of any theory of word or discourse comprehension. However, success to date has been limited by discrepancies in the effects of relatedness of meaning observed within and between tasks. Further, existing accounts are underspecified, narrow in scope, and mutually inconsistent. The current work introduces the semantic settling dynamics account of semantic ambiguity resolution. On this account, the discrepant effects are explained by the temporal dynamics of settling in semantics within a neural network. They also depend on how these dynamics interact with the semantic representations of ambiguous words over time. This account stands as an alternative to one based on the configuration of the decision system across tasks (Hino, Pexman, & Lupker, 2006, Journal of Memory and Language). The proposed account reconciles a wide body of disparate results within a single, unified mechanistic account. It is supported by initial investigations that vary processing time to modulate semantic ambiguity effects. It also generates targeted predictions for future computational, neural, and behavioral research.

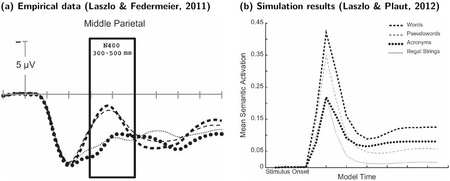

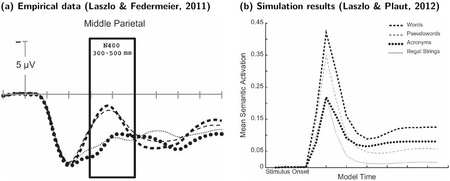

N400.

The study of the N400 event-related brain potential has provided fundamental insights into the nature of real-time comprehension processes. Its amplitude is modulated by a wide variety of stimulus and context factors. It is generally thought to reflect the difficulty of semantic access, but formulating a precise characterization of this process has proved difficult. Laszlo and colleagues (Laszlo & Plaut, 2012, Brain and Language, 120, 271-281; Laszlo & Armstrong, 2014, Brain and Language, 132, 22-27) used physiologically constrained neural networks to model the N400. In these models, it arises as transient over-activation within semantic representations, a consequence of the distribution of excitation and inhibition within and between cortical areas. The current work extends this approach to model the effects of several factors on both N400 amplitudes and behavior. These factors are word frequency, semantic richness, repetition, semantic and associative priming, and orthographic neighborhood size. The account is argued to be preferable to one based on "semantic prediction error" (Rabovsky & McRae, 2014, Cognition, 132, 68-98) for a number of reasons. The most fundamental is that the current model actually produces N400-like waveforms in its real-time activation dynamics.

Sequential Behavior

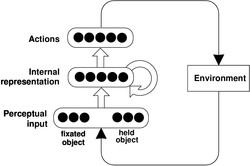

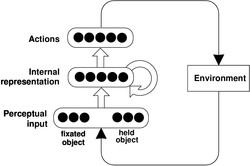

Routine action.

In everyday tasks, selecting actions in the proper sequence requires a continuously updated representation of temporal context. Many existing models address this problem by positing a hierarchy of processing units, mirroring the roughly hierarchical structure of naturalistic tasks themselves. Although intuitive, such an approach has led to a number of difficulties. These include a reliance on overly rigid sequencing mechanisms and a limited ability to address both learning and context sensitivity in behavior. A sequential (recurrent) neural network, by contrast, can deal flexibly with a complex set of sequencing constraints. It encodes contextual information at multiple timescales within a single, distributed internal representation (Botvinick & Plaut, 2004). The model accounts not only for skilled action performance but also for the everyday “slips of action” that normal individuals commit under distraction. It also accounts for the more severe degradation in performance following damage observed in ideational apraxia. An analogous model in the domain of language acquisition and processing accounts for the integration of semantic and syntactic constraints on sentence processing (Rohde & Plaut, 1999). Finally, the same type of model, operating at a shorter timescale, provides a parsimonious account of numerous benchmark phenomena in immediate serial recall (Botvinick & Plaut, 2006). These include data that have been considered to preclude the application of neural networks in this domain. Unlike most competing accounts, the model deals naturally with findings concerning the role of background knowledge in serial recall, and it makes contact with relevant neuroscientific data.
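The core idea is that temporal context can be carried by a single distributed hidden representation rather than by a hierarchy of units. A generic Elman-style simple recurrent network illustrates this. The Python sketch below is a minimal forward pass with random, untrained weights. The `SimpleRecurrentNetwork` class, its tanh hidden units, and its sigmoid outputs are assumptions for illustration. They are not the architecture or training regime of the models cited here.

```python
import math
import random
from typing import List


class SimpleRecurrentNetwork:
    """Minimal Elman-style recurrent network (hypothetical, untrained, illustration only)."""

    def __init__(self, n_in: int, n_hidden: int, n_out: int, seed: int = 0) -> None:
        rng = random.Random(seed)

        def init(rows: int, cols: int) -> List[List[float]]:
            return [[rng.uniform(-0.5, 0.5) for _ in range(cols)] for _ in range(rows)]

        self.w_in = init(n_hidden, n_in)        # input -> hidden
        self.w_ctx = init(n_hidden, n_hidden)   # previous hidden state (context) -> hidden
        self.w_out = init(n_out, n_hidden)      # hidden -> output
        self.context = [0.0] * n_hidden         # empty context at the start of a sequence

    def step(self, x: List[float]) -> List[float]:
        # The new hidden state combines the current input with the previous hidden state.
        hidden = [
            math.tanh(
                sum(w * xi for w, xi in zip(row_in, x))
                + sum(w * c for w, c in zip(row_ctx, self.context))
            )
            for row_in, row_ctx in zip(self.w_in, self.w_ctx)
        ]
        self.context = hidden  # copy-back: this state is the context for the next step
        # Sigmoid outputs, e.g. one score per possible next action.
        return [
            1.0 / (1.0 + math.exp(-sum(w * h for w, h in zip(row, hidden))))
            for row in self.w_out
        ]

    def reset(self) -> None:
        self.context = [0.0] * len(self.context)
```

The hidden state is copied back as context. As a result, presenting the same input after different histories yields different outputs. This is the property that lets such networks track where they are in a sequence without an explicit hierarchy.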

- Rohde, D.L.T., and Plaut, D.C. (1999). Language acquisition in the absence of explicit negative evidence: How important is starting small? Cognition, 72, 67-109.
- Botvinick, M., and Plaut, D.C. (2004). Doing without schema hierarchies: A recurrent connectionist approach to normal and impaired routine sequential action. Psychological Review, 111, 395-429.
- Botvinick, M., and Plaut, D.C. (2006). Short-term memory for serial order: A recurrent neural network model. Psychological Review, 113, 201-233.

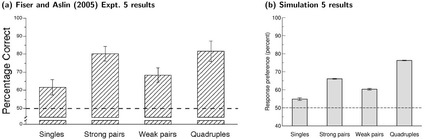

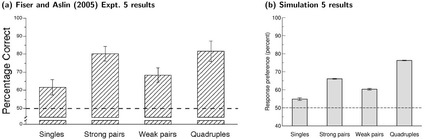

Statistical learning.

Statistical learning is often cast as a means of discovering the units of perception, such as words and objects, and representing them as explicit "chunks". However, entities are not undifferentiated wholes; they often contain parts that contribute systematically to their meanings. Studies of incidental auditory or visual statistical learning suggest that, as participants learn about wholes, they become insensitive to the parts embedded within them (Fiser & Aslin, 2005; Giroux & Rey, 2009). This seems difficult to reconcile with a broad range of findings in which parts and wholes work together to contribute to behavior. In the current work, we adopt a computational approach based on learning in artificial neural networks. It can capture statistical structure at multiple levels of representation simultaneously, yet it eschews the notion of explicit chunks. Rather, the extent to which a particular subset of the input in a particular context is represented coherently is a matter of degree. Likewise, the extent to which structure at one level of analysis cooperates or competes with structure at other levels is not prespecified; it arises naturally through incidental learning. We show that the approach accounts for a wide range of findings concerning the relationship between parts and wholes in auditory and visual statistical learning. These include some findings previously thought to be problematic for neural network approaches.

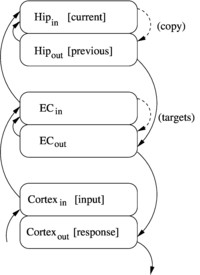

Rapid sequence learning.

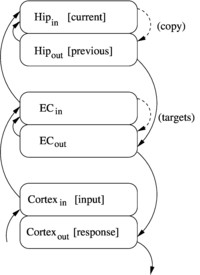

We have developed a model of rapid sequence learning by the hippocampus and applied it to account for repetition effects in immediate serial recall (ISR) and the discovery of structure in auditory statistical learning. The model supports one-trial learning of novel sequences through fast predictive learning from sparse but structure-sensitive hippocampal representations of items and the contexts in which they occur. In the model, the accumulation of learning effects across trials gives rise to an advantage for whole-list repetition in ISR. It also produces reductions in this effect when repetitions vary in temporal grouping, in their onsets, or in the order of items. Shared structure across lists, such as repetition of item-item and item-position associations, accumulates with sufficient exposure, reflecting structure-sensitive overlap among the sparse representations. This same sensitivity discovers the statistical structure within continuous streams of input, as observed in standard statistical learning paradigms. Analyses show that the structure of the training environment systematically influences the degree to which item and position information are represented independently versus conjunctively. The resulting representations are broadly consistent with functional neuroimaging data on changes in representational similarity during sequential learning. The model shares important properties with a number of existing models and can be viewed as an integration of them that accounts for a broader range of phenomena.

- Nakayama, M., and Plaut, D.C. (submitted). A hippocampal model of rapid sequence learning applied to immediate serial recall and statistical learning. Psychological Review.

Neural Representations

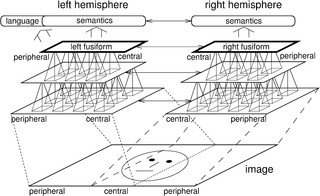

Faces and words.

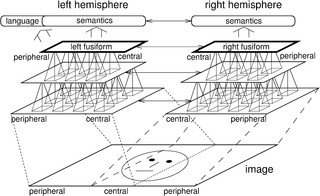

The neural mechanisms supporting visual recognition of faces, words, and other objects are increasingly conceptualized as a distributed but integrated system that becomes organized gradually over the course of development. On the older view, they are a set of individual, specialized regions subserving particular visual domains (Behrmann & Plaut, 2013). To understand the emergence of this organization, we adopt a specific theoretical perspective. Visual recognition involves topographically constrained cooperation and competition among multiple, interacting regions, each of which is only partially selective for a specific domain. This perspective was applied to faces and words in an explicit computational simulation (Plaut & Behrmann, 2011). In that simulation, these domains compete to be near high-acuity visual information in each hemisphere. Words become more left-lateralized to cooperate with language-related information and, in response, faces subsequently become more right-lateralized. The account thus makes specific and otherwise unexpected predictions concerning the co-mingling of these two seemingly unrelated domains. The predictions concern the course of development, neurophysiological measures of recognition in both children and adults, and graded patterns of impairment in both domains following unilateral brain damage. These predictions have been supported by subsequent empirical studies (e.g., Behrmann & Plaut, 2014; Nestor, Behrmann & Plaut, 2013; Nestor, Plaut & Behrmann, 2011). The research offers a novel theoretical perspective that has broad implications for theories of normal and atypical cognitive and neural development, and for instruction and remediation.

- Plaut, D.C., and Behrmann, M. (2011). Complementary neural representations for faces and words: A computational exploration. Cognitive Neuropsychology, 28, 251-275.
- Nestor, A., Plaut, D.C., and Behrmann, M. (2011). Unraveling the distributed neural code of facial identity through spatiotemporal pattern analysis. Proceedings of the National Academy of Sciences USA, 108, 9998-10003.
- Behrmann, M., and Plaut, D.C. (2013). Distributed circuits, not circumscribed centers, mediate visual cognition. Trends in Cognitive Sciences, 17, 210-219.
- Nestor, A., Behrmann, M., and Plaut, D.C. (2013). The neural basis of visual word form processing: A multivariate investigation. Cerebral Cortex, 23, 1673-1684.
- Behrmann, M., and Plaut, D.C. (2014). Bilateral hemispheric representation of words and faces: Evidence from word impairments in prosopagnosia and face impairments in pure alexia. Cerebral Cortex, 24, 1102-1118.
- Robinson, A.K., Plaut, D.C., and Behrmann, M. (2017). Word and face processing engage overlapping distributed networks: Evidence from RSVP and EEG investigations. Journal of Experimental Psychology: General, 146, 943-961. doi:10.1037/xge0000302

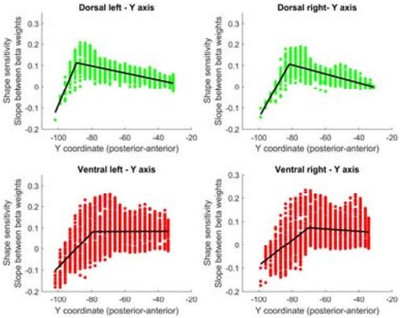

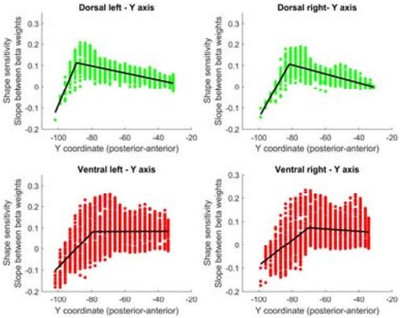

Dorsal object representations.

The cortical visual system is almost universally thought to be segregated into two anatomically and functionally distinct pathways. A ventral occipito-temporal pathway subserves object perception, and a dorsal occipito-parietal pathway subserves object localization and visually guided action. Accumulating evidence from both human and non-human primate studies, however, challenges this binary distinction. It suggests that regions in the dorsal pathway contain object representations that are independent of those in ventral cortex and that play a functional role in object perception. We are exploring the nature of dorsal object representations through a combination of behavioral, neuropsychological, neuroimaging, and computational work. We propose a graded functional account of the anatomical organization, functional contributions, and origins of these representations in the service of perception and action.

- Freud, E., Plaut, D.C., and Behrmann, M. (2016). "What" is happening in the dorsal visual pathway. Trends in Cognitive Sciences, 20, 773-784. doi:10.1016/j.tics.2016.08.003
- Freud, E., Culham, J., Plaut, D.C., and Behrmann, M. (2017). The large-scale organization of shape processing in the ventral and dorsal pathways. eLife, 6, e27576. doi:10.7554/eLife.27576
- Freud, E., Plaut, D.C., and Behrmann, M. (submitted). Protracted developmental trajectory of shape processing along the two visual pathways. eLife.

Much of my early work focused on word reading, both in normal skilled readers

and in brain-damaged patients with acquired reading disorders. Word reading

is a particularly informative domain for studying cognitive processes because

it involves learning to relate multiple sources of information—visual

(orthographic), phonological, and semantic—in a highly skilled manner. My

colleagues and I have developed artificial neural-network (connectionist)

models that exhibit many of the central characteristics of skilled reading,

including the influences of word frequency and spelling-sound consistency on

the time to pronounce words and the ability to pronounce word-like nonsense

letter strings (e.g., MAVE) and to distinguish them from real words in lexical

decision tasks (Plaut, McClelland, Seidenberg & Patterson, 1996). When the

models are damaged in various ways, they exhibit the major forms of acquired

dyslexia, including deep dyslexia, in which patients make semantic errors in

reading aloud (e.g., misreading YACHT as “boat”; Plaut & Shallice, 1993) and

surface dyslexia, in which patients produce regularization errors to exception

words (e.g., misreading YACHT as “yatched”; Woollams, Lambon Ralph, Plaut &

Patterson, 2007). Moreover, retraining the damaged models yields patterns of

recovery and generalization that are qualitatively similar to those found in

cognitive rehabilitation studies and has, in one instance (Plaut, 1996),

generated a specific prediction concerning the design of more effective

therapy for patients that later received direct empirical support (Kiran &

Thompson, 2003, JSLHR).

Much of my early work focused on word reading, both in normal skilled readers

and in brain-damaged patients with acquired reading disorders. Word reading

is a particularly informative domain for studying cognitive processes because

it involves learning to relate multiple sources of information—visual

(orthographic), phonological, and semantic—in a highly skilled manner. My

colleagues and I have developed artificial neural-network (connectionist)

models that exhibit many of the central characteristics of skilled reading,

including the influences of word frequency and spelling-sound consistency on

the time to pronounce words and the ability to pronounce word-like nonsense

letter strings (e.g., MAVE) and to distinguish them from real words in lexical

decision tasks (Plaut, McClelland, Seidenberg & Patterson, 1996). When the

models are damaged in various ways, they exhibit the major forms of acquired

dyslexia, including deep dyslexia, in which patients make semantic errors in

reading aloud (e.g., misreading YACHT as “boat”; Plaut & Shallice, 1993) and

surface dyslexia, in which patients produce regularization errors to exception

words (e.g., misreading YACHT as “yatched”; Woollams, Lambon Ralph, Plaut &

Patterson, 2007). Moreover, retraining the damaged models yields patterns of

recovery and generalization that are qualitatively similar to those found in

cognitive rehabilitation studies and has, in one instance (Plaut, 1996),

generated a specific prediction concerning the design of more effective

therapy for patients that later received direct empirical support (Kiran &

Thompson, 2003, JSLHR).

Traditional theories posit that complex words are composed of discrete units

called morphemes that contribute systematically to their meanings (e.g.,

TEACH+ER, GOVERN+MENT), but some words are awkward on this account (e.g.,

DRESS+ER is not someone who dresses; MOTH+ER, FATH+ER, SIST+ER, BROTH+ER and

all agents but the remaining parts are not coherent units). On a distributed

connectionist approach, however, morphology reflects a learned sensitivity to

the graded degree of systematicity among the surface forms of words and their

meanings, without the need to posit discrete segmentation. Explicit

simulations demonstrate that, in accordance with empirical findings (e.g.,

Velan, Frost, Deutsch & Plaut, 2005), the degree of sensitivity to apparent

morphological structure in the absence of semantic similarity (e.g., BROTH+ER)

depends on the overall morphological richness of the language as a whole

(Plaut & Gonnerman, 2000). More generally, insights drawn from the

connectionist perspective on morphology and its debate with “rule-based”

accounts (in particular, concerning the English past-tense system) have been

assimilated into many areas in the study of language, changing the focus of

research from abstract characterizations of linguistic competence to an

emphasis on the role of the statistical structure of language in acquisition

and processing (Seidenberg & Plaut, 2014).

Optic aphasia.

A longstanding debate regarding the representation of semantic knowledge is

whether such knowledge is represented in a single, amodal system or whether it

is organized into multiple subsystems based on modality of input or type of

information. A distributed connectionist perspective offers a middle ground,

in which semantic representations develop under the pressure of learning to

mediate between multiple input and output modalities in performing various

tasks, under a constraint to minimize connection length (and, hence, overall

axon volume). An implemented model provides a quantitative account of optic

aphasia (a selective deficit in naming visually presented objects) following

damage to connections from vision to regions of semantics near phonology

(Plaut, 2002). Additional implementations of the process by which visual

representations activate semantics account for 1) detailed patterns of

semantic priming and how these vary across individuals over the course of

development (Plaut & Booth, 2000); and 2) distinct patterns of impairment in word

and picture comprehension reflecting “access” versus “degraded-store” deficits

(Gotts & Plaut, 2002).
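
The connection-length constraint can be written as a distance-weighted penalty on the weights. The form below is an assumption for illustration. The symbols $d_{ij}$ and $\lambda$ are introduced here, and Plaut (2002) may implement the constraint differently.

```latex
E_{\text{total}} = E_{\text{task}} + \lambda \sum_{i,j} d_{ij}\, w_{ij}^{2}
```

The terms are defined as follows:

- $E_{\text{task}}$ is the error summed over the mapping tasks.
- $w_{ij}$ is the weight on the connection from unit $j$ to unit $i$.
- $d_{ij}$ is the distance between those units in an assumed spatial layout of the network.
- $\lambda \ge 0$ sets the strength of the pressure.

A long connection costs more than a short one carrying the same weight. Learning therefore tends to route each mapping through nearby units, which is one way graded, modality-sensitive specialization could arise without separate semantic stores.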

Semantic ambiguity.

The meanings of most words depend on the context in

which they occur (e.g.,

N400.

The study of the N400 event-related brain potential has provided fundamental

insights into the nature of real-time comprehension processes, and its

amplitude is modulated by a wide variety of stimulus and context factors. It

is generally thought to reflect the difficulty of semantic access, but

formulating a precise characterization of this process has proved difficult.

Laszlo and colleagues (Laszlo & Plaut, 2012, Brain and Language,

120, 271-281; Laszlo & Armstrong, 2014, Brain and Language,

132, 22-27) used physiologically constrained neural networks to model the N400

as transient over-activation within semantic representations, arising as a

consequence of the distribution of excitation and inhibition within and between

cortical areas. The current work extends this approach to successfully model

effects on both N400 amplitudes and behavior of word frequency, semantic

richness, repetition, semantic and associative priming, and orthographic

neighborhood size. The account is argued to be preferable to one based on

"semantic prediction error" (Rabovsky & McRae, 2014, Cognition,

132, 68-98) for a number of reasons, the most fundamental of which is

that the current model actually produces N400-like waveforms in its real-time

activation dynamics.
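
The general principle behind "transient over-activation" can be illustrated with a toy two-population rate model. In this model, an excitatory unit responds quickly to input, and a slower inhibitory unit driven by that excitation catches up only afterwards. The sketch is a generic illustration under assumed parameters (`tau_e`, `tau_i`, `w_inh` are hypothetical choices). It is not the Laszlo & Plaut network.

```python
def simulate_ei(drive=1.0, w_inh=1.0, tau_e=1.0, tau_i=5.0, dt=0.1, steps=500):
    """Euler-integrate a two-population rate model: an excitatory
    'semantic' unit E driven by external input and inhibited by a
    slower inhibitory unit I that is itself driven by E.
    Returns the activation of E at every time step."""
    e, i = 0.0, 0.0
    trace = []
    for _ in range(steps):
        # Net input to E is rectified so its target activation is never negative.
        de = (-e + max(0.0, drive - w_inh * i)) / tau_e
        # I slowly tracks E (tau_i > tau_e), so inhibition lags excitation.
        di = (-i + e) / tau_i
        e += dt * de
        i += dt * di
        trace.append(e)
    return trace

if __name__ == "__main__":
    trace = simulate_ei()
    print(f"peak E = {max(trace):.3f}, final E = {trace[-1]:.3f}")
```

With these settings, E rises well above its eventual resting level before the lagging inhibition pulls it back to 0.5. Setting `w_inh=0.0` removes the overshoot, and E then rises monotonically toward 1.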

Routine action.

In everyday tasks, selecting actions in the proper sequence requires a

continuously updated representation of temporal context. Many existing models

address this problem by positing a hierarchy of processing units, mirroring

the roughly hierarchical structure of naturalistic tasks themselves. Although

intuitive, such an approach has led to a number of difficulties, including a

reliance on overly rigid sequencing mechanisms and a limited ability to

address both learning and context sensitivity in behavior. A sequential

neural network, by contrast, can deal flexibly with a complex set of

sequencing constraints, encoding contextual information at multiple

time-scales within a single, distributed internal representation (Botvinick &

Plaut, 2004). The model not only accounts for skilled action performance, but

also everyday “slips of action” that normal individuals commit under

distraction, as well as more severe degradation in performance following

damage, as observed in ideational apraxia. An analogous model in the domain

of language acquisition and processing accounts for the integration of

semantic and syntactic constraints on sentence processing (Rohde & Plaut,

1999). Finally, the same type of model, at a shorter timescale, provides a

parsimonious account of numerous benchmark phenomena in the domain of

immediate serial recall (Botvinick & Plaut, 2006), including data that have

been considered to preclude the application of neural networks in this domain.

Unlike most competing accounts, the model deals naturally with findings

concerning the role of background knowledge in serial recall, and makes

contact with relevant neuroscientific data.
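
The key property of such a sequential network is that the hidden pattern from one step is fed back as context on the next step. As a result, a single distributed representation carries information about the whole history of the sequence. The sketch below shows only the forward pass of a generic Elman-style simple recurrent network. The names `make_srn` and `run_sequence`, the layer sizes, and the random initialization are assumptions. Training (normally by a gradient method such as backpropagation) is omitted.

```python
import math
import random

def make_srn(n_in, n_hidden, n_out, seed=0):
    """Create random weights for a simple recurrent network (hypothetical layout)."""
    rng = random.Random(seed)

    def rand_matrix(rows, cols):
        return [[rng.uniform(-0.5, 0.5) for _ in range(cols)] for _ in range(rows)]

    return {
        "w_in": rand_matrix(n_hidden, n_in),       # input -> hidden
        "w_ctx": rand_matrix(n_hidden, n_hidden),  # previous hidden (context) -> hidden
        "w_out": rand_matrix(n_out, n_hidden),     # hidden -> output
    }

def logistic(x):
    return 1.0 / (1.0 + math.exp(-x))

def dot(row, vec):
    return sum(w * v for w, v in zip(row, vec))

def run_sequence(net, inputs):
    """Process a sequence one step at a time. The previous hidden pattern
    is fed back as context, so each output depends on the sequence history,
    not just on the current input."""
    n_hidden = len(net["w_in"])
    context = [0.5] * n_hidden  # neutral initial context
    outputs = []
    for x in inputs:
        hidden = [logistic(dot(wi, x) + dot(wc, context))
                  for wi, wc in zip(net["w_in"], net["w_ctx"])]
        outputs.append([logistic(dot(wo, hidden)) for wo in net["w_out"]])
        context = hidden  # copy the hidden state into the context for the next step
    return outputs
```

In a sequence such as `[[1, 0, 0], [0, 1, 0], [1, 0, 0]]`, the first and third inputs are identical. They nevertheless yield different outputs, because the context differs at those two points.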

Statistical learning.

Statistical learning is often cast as a means of discovering the units of

perception, such as words and objects, and representing them as explicit

"chunks". However, entities are not undifferentiated wholes but often

contain parts that contribute systematically to their meanings. Studies of

incidental auditory or visual statistical learning suggest that, as

participants learn about wholes, they become insensitive to parts embedded

within them (Fiser & Aslin, 2005; Giroux & Rey, 2009), but this seems

difficult to reconcile with a broad range of findings in which parts and

wholes work together to contribute to behavior. In the current work, we adopt

a computational approach, based on learning in artificial neural networks,

that is capable of capturing statistical structure at multiple levels of

representation simultaneously and yet eschews the notion of explicit chunks.

Rather, the extent to which a particular subset of the input in a particular

context is represented in a coherent manner is a matter of degree, and the

extent to which structure at one level of analysis cooperates or competes with

structure at other levels is not prespecified but arises naturally through

incidental learning. We show that the approach accounts for a wide range of

findings concerning the relationship between parts and wholes in auditory and

visual statistical learning, including some previously thought to be

problematic for neural network approaches.

Rapid sequence learning.

We have developed a model of rapid sequence learning by the hippocampus, and

applied it to account for repetition effects in immediate serial recall (ISR)

and the discovery of structure in auditory statistical learning. The model

supports one-trial learning of novel sequences through fast predictive

learning from sparse but structure-sensitive hippocampal representations of

items and the contexts in which they occur. In the model, the accumulation of

learning effects across trials gives rise to an advantage for whole-list

repetition in ISR, as well as reductions in this effect when repetitions vary

in temporal grouping, in their onsets, or in the order of items. Shared

structure across lists, such as repetition of item-item and item-position

associations, accumulates with sufficient exposure, reflecting

structure-sensitive overlap among the sparse representations. This same

sensitivity discovers the statistical structure within continuous streams of

input, as observed in standard statistical learning paradigms. Analyses show

that the structure of the training environment systematically influences the

degree to which item and position information are represented independently

versus conjunctively, and the resulting representations are broadly consistent

with functional neuroimaging data on changes in representational similarity

during sequential learning. The model shares important properties with a

number of existing models and can be viewed as an integration of them that

accounts for a broader range of phenomena.

Faces and words.

The neural mechanisms supporting visual recognition of faces, words, and other

objects are increasingly conceptualized as a distributed but integrated system

that becomes organized gradually over the course of development, rather than as

a set of individual, specialized regions subserving particular visual domains

(Behrmann & Plaut, 2013). In understanding the emergence of this

organization, we adopt a specific theoretical perspective in which visual

recognition involves topographically constrained cooperation and competition

among multiple, interacting regions, each of which is only partially selective

for a specific domain. When applied to faces and words in an explicit

computational simulation (Plaut & Behrmann, 2011), these domains compete to be

near high-acuity visual information in each hemisphere; words become more

left-lateralized to cooperate with language-related information and, in

response, faces subsequently become more right-lateralized. The account thus

makes specific and otherwise unexpected predictions—supported by subsequent

empirical studies (e.g., Behrmann & Plaut, 2014; Nestor, Behrmann & Plaut,

2013; Nestor, Plaut & Behrmann, 2013)—concerning the co-mingling of these two

seemingly unrelated domains over the course of development, in

neurophysiological measures of recognition in both children and adults, and in

graded patterns of impairment in both domains following unilateral brain

damage. The research offers a novel theoretical perspective that has broad

implications for theories of normal and atypical cognitive and neural

development, and for instruction and remediation.

Dorsal object representations.

The cortical visual system is almost universally thought to be segregated into

two anatomically and functionally distinct pathways: a ventral

occipito-temporal pathway that subserves object perception, and a dorsal

occipito-parietal pathway that subserves object localization and visually

guided action. Accumulating evidence from both human and non-human primate

studies, however, challenges this binary distinction and suggests that regions

in the dorsal pathway contain object representations that are independent of

those in ventral cortex and that play a functional role in object perception.

We are exploring the nature of dorsal object representations through a

combination of behavioral, neuropsychological, neuroimaging, and computational

work. We propose a graded functional account of the anatomical organization,

functional contributions and origins of these representations in the service

of perception and action.
